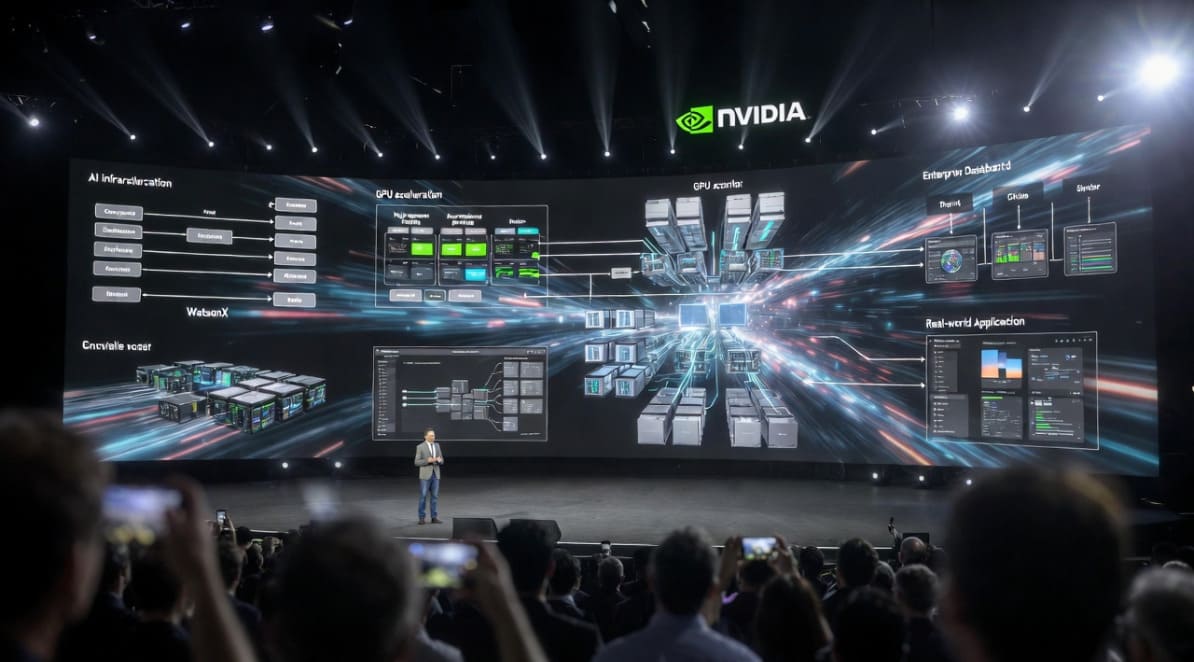

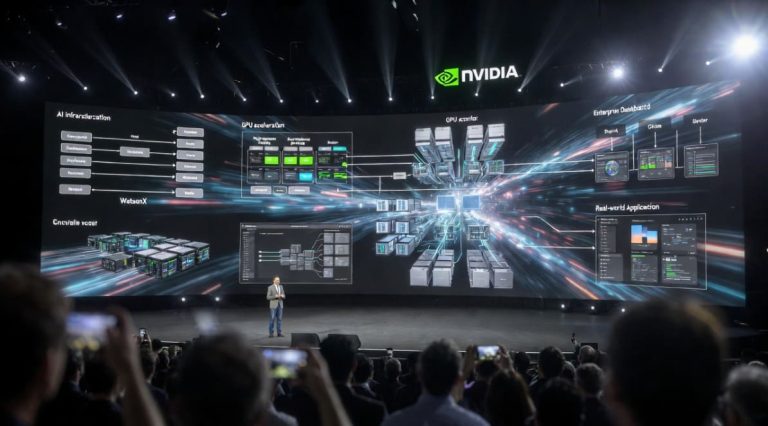

Nvidia GTC is the premier global AI conference. It takes place each year in San Jose and is organized by Nvidia, the world’s largest company with over $4 trillion in market cap. GTC is one of the most closely watched events in technology because Nvidia sits at the centre of the global AI infrastructure buildout.

Jensen Huang, CEO of Nvidia, delivered the keynote on the first day of the event. It was preceded by a futuristic show highlighting how AI is helping humanity and making a difference, and the keynote itself ran for over two hours without a break.

Structured Data was one of the key points that Jensen highlighted.

Other highlights of the event included a recent collaboration between IBM and Nvidia. A video shown during the event described how the two companies are reinventing data processing for the era of AI: Nvidia GPU computing libraries will be leveraged by IBM’s watsonx and its SQL engine. The presentation also mentioned that Nestle can now process data several times faster than before thanks to these systems.

It went under the slogan: “accelerated computing for the era of AI.”

Jensen explained how Nvidia accelerates data processing both in the cloud and on-prem. The charismatic CEO shared a quote from Abhijit Dubey, CEO of NTT Data, which read: “Dell AI Data Platform with NVIDIA helps us build scalable and repeatable data pipelines that drive automation – processing massive data volumes in minutes instead of hours and delivering transformative value for our clients.”

Another quote he shared with the audience came from Saral Jain, CIO of Snap: “Our collaboration with NVIDIA and Google Cloud helps us innovate faster for more than a billion Snapchatters worldwide. By lowering costs and scaling experiments across petabytes of data, we’re delivering AI-powered experiences more quickly and efficiently.”

Structured data acceleration

Jensen shared with the audience that “our relationship with cloud service providers are essentially us bringing customers to them. We integrate our libraries, we accelerate workloads, and we land those customers in the clouds.”

He explained that the cloud providers are always looking to Nvidia to pass customers to them. From Jensen’s perspective, the issue is that there are already so many customers, and there will be more. He also suggested that Moore’s Law is now defunct and that the future will be dictated by new technological advances.

Nvidia will be supporting the integration of OpenAI in AWS. AWS was Nvidia’s first cloud partner. Microsoft’s Azure is also a customer, and Nvidia’s A100 supercomputer was first installed at Microsoft Azure. The collaboration with cloud partners continued with Google Cloud, Oracle and CoreWeave (the world’s first AI-native cloud, which works with Europe’s Mistral AI). Jensen even mentioned Dell and Palantir, which have also started collaborating with Nvidia.

“Accelerated computing is not a chip problem,” Jensen shared. “Accelerated computing is not a systems problem. It just has missing words. Application acceleration are the words missing.”

The concept of the CPU has run out of steam, he added. The only way to accelerate applications going forward is through application-specific or domain-specific acceleration.

“We are a vertically integrated computing company,” Jensen explained.

He also added later: “What makes us special is that we are an algorithm company.”

Three things happened during the last few years that have changed things forever, Jensen highlighted:

- ChatGPT and Generative AI

- Models and Context 10x and Tokens 10x and Reasoning AI

- Models and Context 100x and Tokens 100x and Agentic AI

Tomorrow? Each year Nvidia keeps growing. The company has just doubled its earlier target of $500 billion and now points to over $1 trillion of AI chip revenue by 2026. If AI is the future, Nvidia is the accelerated computing driver that it will need.

Author: Andy Samu
