Multiverse Computing, a Spanish AI startup you’ve probably never heard of, just outperformed Mistral on every single benchmark… with a smaller model.

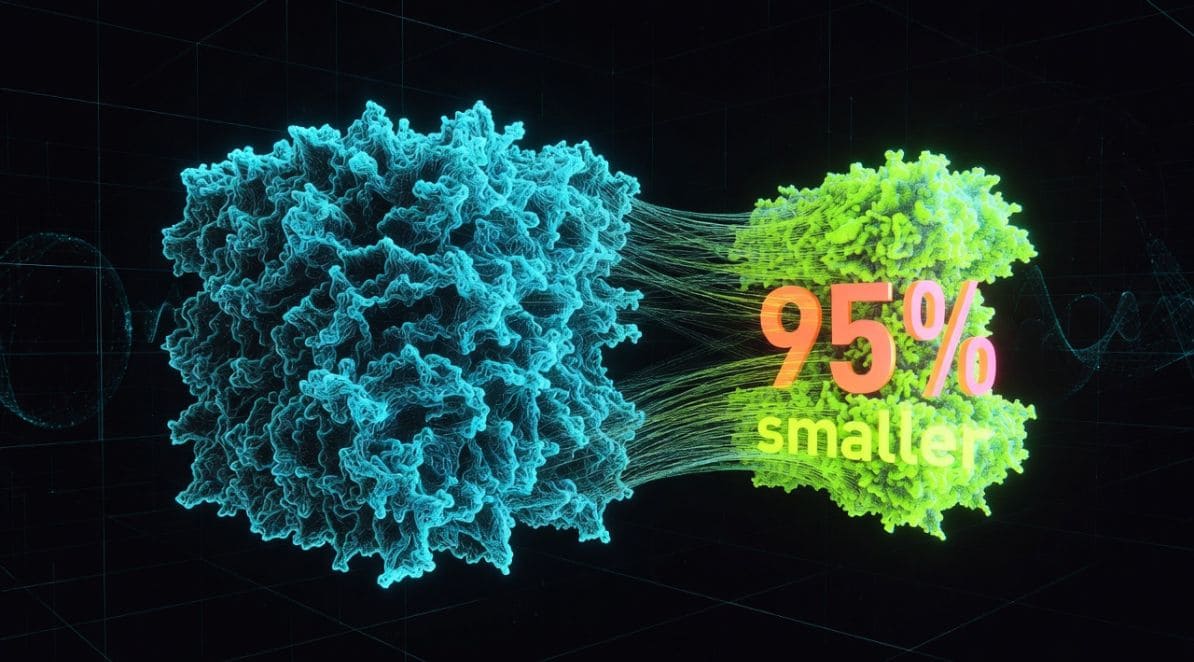

Based in San Sebastian, Multiverse Computing doesn’t build larger language models. It makes existing ones dramatically smaller (up to 95% smaller) without meaningfully degrading their performance. That quiet edge just became very loud.

Between March 13 and 19, the company launched a self-serve API, locked in an alignment with NVIDIA’s latest model family, and signed two major European partnerships. All of it timed to coincide with NVIDIA GTC 2026, all of it executed in one week.

We previously ranked Multiverse Computing #8 on our list of Europe’s 10 Top AI Startups in 2026. Since then, things have changed.

From a WhatsApp Group to Spain’s Biggest AI Fundraise

Multiverse Computing was not born in a boardroom.

In 2017, four people, Enrique Lizaso Olmos, Roman Orus, Alfonso Rubio-Manzanares, and Samuel Mugel, were exchanging ideas in a WhatsApp group about applying quantum computing to financial problems. Two years later, they created Multiverse Computing in Spain.

The founding team is unusually distinguished. Olmos, the CEO, holds a PhD in mathematics and an MBA from IESE, with over 20 years in finance and banking. Orus, Chief Scientific Officer, is an Ikerbasque Research Professor at the Donostia International Physics Center, and his tensor network research is the scientific core of what Multiverse builds. Mugel serves as CTO; Rubio-Manzanares leads marketing and quantum advocacy.

The milestones since have been consistent. The company graduated from Creative Destruction Lab’s quantum program in Toronto in 2020, secured €12.5 million from the European Innovation Council in 2021, and has built over 160 patents, ranking among Spain’s top European patent filers.

CB Insights named Multiverse Computing one of the 100 most promising AI companies in 2023, Digital Europe named it a “Future Unicorn” startup in 2024, and it received the TechTour Growth Europe Award in 2026.

CompactifAI: The Quantum-Inspired Tech That Compresses LLMs by Up to 95%

Multiverse’s original focus was not large language models. It built quantum and quantum-inspired algorithms designed to solve complex optimization problems across industries such as finance, energy, and manufacturing. The shift toward AI came as a direct extension of that work.

By 2023, Multiverse identified that tensor network methods, originally developed in quantum physics, could be applied to compress large language models without materially degrading their performance.

That insight became CompactifAI, now the company’s flagship product.

CompactifAI uses tensor networks to compress large language models (LLMs) with minimal loss of accuracy. The company claims it can shrink a model by up to 95% with only a 2–3% drop in performance. The result, according to Multiverse: compressed models that run 4x to 12x faster and cost 50–80% less to operate than their originals. They aren’t limited to the cloud, either. Ultra-compressed versions can run on phones, PCs, drones, and even a Raspberry Pi. For organizations operating under budget or regulatory constraints, that difference can determine whether deployment is feasible at all.
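To see why factorization can shrink a model so sharply, consider a minimal parameter-count sketch. It uses a plain low-rank factorization as a simplified stand-in for tensor network methods; it is not CompactifAI’s actual algorithm, and the 4096 × 4096 layer size and the function names are illustrative assumptions. Replacing a dense weight matrix with two thin factors cuts the parameter count from rows × cols to rank × (rows + cols).

```python
def dense_params(rows: int, cols: int) -> int:
    """Parameters in a dense weight matrix of shape (rows, cols)."""
    return rows * cols


def low_rank_params(rows: int, cols: int, rank: int) -> int:
    """Parameters after factoring the matrix as (rows x rank) @ (rank x cols)."""
    return rank * (rows + cols)


def max_rank_for_reduction(rows: int, cols: int, reduction: float) -> int:
    """Largest rank whose factors fit within the target parameter budget."""
    budget = (1.0 - reduction) * dense_params(rows, cols)
    return int(budget // (rows + cols))


# Hypothetical layer size, chosen only for illustration.
rows, cols = 4096, 4096
rank = max_rank_for_reduction(rows, cols, reduction=0.95)
saved = 1.0 - low_rank_params(rows, cols, rank) / dense_params(rows, cols)

print(f"dense parameters:    {dense_params(rows, cols):,}")
print(f"rank used:           {rank}")
print(f"factored parameters: {low_rank_params(rows, cols, rank):,}")
print(f"parameter reduction: {saved:.2%}")
```

Under these assumptions, a rank of 102 keeps just under 5% of the original parameters for that layer. How much accuracy survives at that rank is an empirical question this sketch does not answer.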

That positioning, sitting between cutting-edge AI capability and real-world deployment constraints, is exactly why it earned a place among our list of Europe’s 10 Top AI Startups in 2026.

The CompactifAI Self-Serve API Portal That Launched During NVIDIA GTC 2026

Until March 16, access to Multiverse’s compressed models largely ran through the AWS Marketplace. That dependency is now optional.

The new CompactifAI API portal, launched on the opening day of GTC 2026, gives developers and enterprises direct, self-serve access to the company’s model library, alongside real-time token usage tracking.

CEO Olmos was explicit about the intent: the portal now provides developers the “transparency and control needed to run them in production.”

Removing the intermediary matters more than it might appear. Enterprise AI teams have learned that indirect access layers introduce friction, from opaque billing to integration constraints and operational risk. Direct access simplifies deployment and tightens cost control. To accelerate adoption during GTC, Multiverse offered free credits to new users signing up within the event window.

NVIDIA Nemotron-3 Is Coming to CompactifAI — With Real Stakes Attached

On the same day the portal launched, Multiverse announced plans to host NVIDIA’s newly unveiled Nemotron-3 model family within the CompactifAI API, including the multimodal Nemotron-3 Omni model.

The underlying logic is clean. NVIDIA’s multimodal models expand capability. Multiverse’s compression reduces cost. Together, they address one of the core constraints in enterprise AI adoption: the persistent trade-off between performance and infrastructure expense.

Olmos framed the opportunity in operational terms: hosting Nemotron-3 Omni allows organisations to experiment, deploy, and scale generative AI faster across a broader set of use cases.

Yet one caveat remains: no firm timeline has been given for Nemotron-3 availability within CompactifAI. An announcement and a delivery are two different things, and that distinction matters for enterprise teams doing roadmap planning. The integration is worth watching closely, but it shouldn’t be treated as available today.

Benchmark Performance: How Multiverse’s Models Stack Up Against Mistral

The product-level proof is in the benchmarks.

According to Multiverse Computing’s published results, BlackStar 12B, its compressed and optimized version of OpenAI’s GPT-OSS-20B, outperforms Mistral AI’s Ministral 14B across every key metric:

- 2× better reasoning performance

- 33% higher intelligence index

- 54% lower memory usage

- 47% higher throughput

- and 32% faster latency.

A 12-billion parameter model outperforming a 14-billion parameter competitor on every operational measure is the core claim.

The gap widens at scale. HyperNova 60B 2602, built on OpenAI’s open-weight GPT-OSS-120B, beats Mistral 3 Large with:

- 1.9× better reasoning

- 92% lower memory usage

- 2.8× higher throughput

- and 65% faster latency, at roughly half the parameter count.

For enterprises calculating compute bills, these numbers reframe the procurement decision. With private credit defaults running at a record 9.2%, and VC firms like Lux Capital now advising companies to get compute capacity commitments in writing, models that use 54–92% less memory than comparable alternatives offer both a technical and a financial advantage.
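One way to read the memory figures above is to ask how many copies of a compressed model fit in the memory its comparison model would occupy. The sketch below does only that arithmetic from the published percentages. It assumes memory use scales linearly with the number of deployed copies, and the helper names are hypothetical. The published results quoted here do not say how memory was measured, for example weights only or including runtime overhead.

```python
def remaining_fraction(percent_lower: float) -> float:
    """Fraction of baseline memory still needed after an 'X% lower' saving."""
    return 1.0 - percent_lower / 100.0


def copies_per_baseline(percent_lower: float) -> float:
    """How many compressed copies fit in one baseline model's memory."""
    return 1.0 / remaining_fraction(percent_lower)


# Memory savings as quoted from Multiverse's published comparisons.
claims = {
    "BlackStar 12B vs Ministral 14B": 54.0,
    "HyperNova 60B 2602 vs Mistral 3 Large": 92.0,
}

for label, pct in claims.items():
    print(
        f"{label}: {remaining_fraction(pct):.0%} of baseline memory, "
        f"about {copies_per_baseline(pct):.1f} copies per baseline footprint"
    )
```

Read this way, a 54% saving means roughly two copies in the space of one, while a 92% saving means about twelve. That is the difference between trimming a hardware budget and changing what hardware is needed at all.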

Axelera AI + Inetum: Building a Real Distribution Stack

The company’s activity around GTC extended beyond NVIDIA. Two partnerships in one week signal commercial intent.

On March 13, Multiverse formalized a strategic alliance with Inetum, a European digital services firm with 27,000 consultants across 19 countries and €2.4 billion in 2024 revenue. Anchored at Inetum’s AMAIA AI Experience Center in Bilbao, the partnership positions Inetum as a primary go-to-market channel for Multiverse’s technology.

Four days later, on March 17, Multiverse announced a technology collaboration with Dutch chip firm Axelera AI, embedding CompactifAI-compressed models directly into Axelera’s Metis™ and upcoming Europa™ edge hardware platforms. The target markets are industrial, mobility, and defense deployments where cloud is either unavailable or heavily regulated, a segment no major US hyperscaler is seriously addressing.

Both partnerships align with a broader European objective: reducing reliance on non-European AI infrastructure. Ekaterina Zaharieva, EU Commissioner for Startups, Research and Innovation, underscored this point, noting that collaborations like these demonstrate how European deep-tech firms can deliver “sovereign strategic digital technologies.”

$215M Raised, 100+ Enterprise Clients, and a €500M Round in the Pipeline

Multiverse closed a $215M (€189M) Series B in June 2025, the largest AI funding round in Spain to date, led by Bullhound Capital and backed by HP Tech Ventures, Toshiba, SETT, Forgepoint Capital, and others.

It now serves over 100 global customers, including the Bank of Canada, Bosch, and Iberdrola. In February 2026, Bloomberg reported that the company was raising an additional €500 million at a valuation exceeding €1.5 billion.

Can Multiverse Computing Scale? Two Questions Define What Comes Next

Two questions now define Multiverse Computing’s next phase: whether the €500 million funding round closes on expected terms, and whether the Nemotron-3 integration arrives on schedule.

If both materialise, Multiverse transitions from a specialist player into core infrastructure within Europe’s AI stack. If not, March has already shifted the company’s position, from a quiet operator to a company setting the pace in efficient AI deployment.

Either way, this is no longer a company you can describe as flying under the radar.
